Hello again everybody, sorry for the long delay here. It’s been a busy few months! Before we get going today, I wanted to share a few quick things.

First of all, if you have not already, you can join the official State of Surveillance Discord channel here. The channel has been admittedly pretty dead as of late but I’m working to change that. If you are looking for quick access to the news and a group of thoughtful people to talk to about it, this is the place for you.

Separately, I have a podcast you can check out called The Future is Ow. I mentioned the podcast before but there are seven new episodes out already with new ones coming every two weeks. While not directly related to the newsletter, the podcast does cross some familiar territory and spends a good chunk of time talking through some thorny surveillance issues. You can subscribe to it on Apple Podcasts here!

And last but not least, if you are interested in reading any of the work I’m doing at Insider Intelligence, you can view some of my recent articles here. When I began writing there, all of my content was behind a hefty paywall, but now, thanks to some internal changes, some of my top stories will appear free to view every weekday. I’ll post some here when I think they are relevant.

Alright. With that, let’s get into it.

Today’s Big Story

The Misguided Attempts to Democratize Facial Recognition

A little over a month ago, the United States collectively tuned in to an event without comparison. A crowd of hundreds, some brandishing signs and others adorned with face paint and covered in animal pelts, marched their way toward the United States Capitol building.

You know the rest of the story. You’re probably aware some of these marchers made their way past guarded checkpoints with relative ease and stormed the offices of senators and Congress members. You’re probably aware that some of the most zealous invaders hung from walls under the rotunda’s long shadow and you’re also probably aware that others ran their hands along the building’s white walls, smearing them with trails of their own steaming shit. By the end of the day, at least five of those involved died, one from a bullet in the neck discharged by a Capitol Police officer and three others apparently by clogged arteries that seemingly couldn’t handle one more shout.

I don’t think it’s too much of a stretch to posit that most people, years from now, will remember where they were when a confederacy of roughnecks and disillusioned rabble-rousers stormed the nation’s heart of government. I sat huddled in the lobby of my mother’s apartment in Texas working on an issue of this very newsletter. I remember receiving a text from my friend Jonah with a news headline displaying the words “WOMAN SHOT.” Then, I remember turning on the TV to see the smoke.

Call me an old-fashioned nationalist or whatever other descriptor you’d like, but seeing those images left me perturbed. The world had witnessed a watershed moment on live television, something still being collectively worked through. Not long after though, that moment entered a new, less discussed phase. We entered a phase of citizen revenge—revenge carried out via facial recognition.

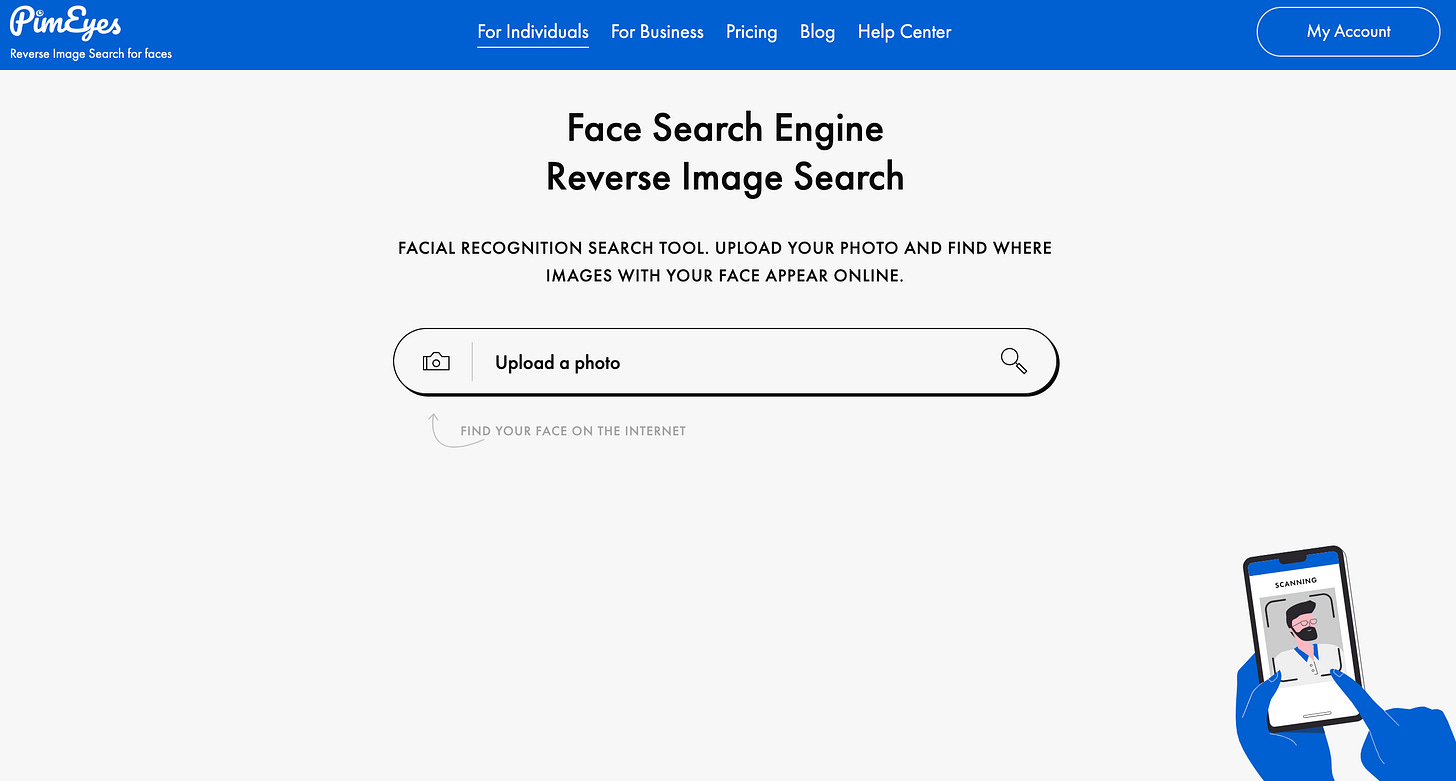

In the weeks following the Capitol Hill riots, eager keyboard vigilantes turned to facial recognition tools to identify, and ultimately dox, some of those involved. One of the most popular services used to deliver judgment was Pimeyes, a service from a Polish website whose founders believe “facial recognition should be available for everyone.”

Users submit photos to Pimeyes, which then runs the images through its program. The application scours every nook and cranny of the open web (be it Instagram photos, Pinterest accounts, or anything else not siloed behind private accounts) and spits back its results.

I ran an image of my own face against the Pimeyes algorithm, which returned 122 results. Only eight of those were of me. The rest were a creepy conglomeration of doppelgangers living alternate online lives. You can look at the results for yourself here.

That same service was used against the DC rioters. In one case, an online activist frustrated by a seeming unwillingness by law enforcement to punish these rioters took a screenshot of a YouTube interview with a mustachioed rioter and fed it to Pimeyes. The resulting images coalesced around one user named Bill Tyrons from Albany, New York. Local media confirmed his identity, after which the man’s name was promptly submitted to the FBI. Just days after the riots took place, the FBI reportedly received more than 4,000 tips from civilians using images, videos, and facial recognition data.

Pimeyes stands out for its intuitive simplicity, but it’s far from the only example of individuals turning to facial recognition for perceived justice. Technologists ran home-made facial recognition algorithms against a cache of rioter videos posted to the now-crippled far-right social media site Parler and were able to track individual faces and, in some cases, “pinpoint where a person was at specific points of time.”

Last year, activists used a similar approach to attempt to “unmask” police officers who had used excessive, sometimes barbaric, force when responding to Black Lives Matter protestors. Before that, dissidents in Hong Kong created their own facial recognition system to identify officers there deemed to have brutalized pro-democracy protestors.

You can’t deny the poetic irony of all this. Law enforcement—and in the case of the DC riots, their civilian enablers—suddenly found themselves on the receiving end of the very same surveillance chimera they so willingly let loose against minorities, the poor, and the powerless, often with reckless abandon.

While you won’t catch me shedding a tear for bloodthirsty members of law enforcement or deluded DC rioters dreaming of upending democratic governance, this supposed “democratizing” of facial recognition is a double-edged sword at best, and its continued use risks further undermining the very same groups and causes its newfound sympathizers claim to cherish.

The types of facial recognition programs outlined above were once limited to the purview of the established Big Tech giants. Rapidly falling costs and continued technological advancements, though, have flipped that script, and we’re just now starting to see the consequences.

Separately, eager tech start-ups are racing to become the next torchbearer of the surveillance industry. The most popular of this emerging cadre (through infamy rather than esteem) is Clearview AI. If you didn’t know Clearview profits off the exchange of unconsenting face images, you’d be forgiven for mistaking it for any other eye-roll-inducing Silicon Valley upstart. Its Australian founder, Hoan Ton-That, even comes pre-equipped with unkempt shoulder-length hair. Though I haven't seen photos of his feet, I wouldn’t be shocked if they hovered over sandals. Yet, as readers of this newsletter know, Ton-That’s company doesn’t deliver smoothies or spread cat videos but instead brings in its revenue from building out databases of billions of faces scraped from the open web, which it then sells to an estimated 2,400 police departments.

Not long ago, that type of power was reserved for the likes of IBM, Microsoft, and Google, but thanks to the factors mentioned above and an abundance of available user data, companies like Clearview can essentially offer cops the same services as Big Tech but cheaper and with fewer pesky ethical reservations.

Let’s expand on the topic of the data used for just a moment. A 2020 IDC report predicts the amount of data created around the globe over the next three years will exceed the amount created over the past 30 years. Meanwhile, the number of actual humans posting data online is expected to increase as well. Cisco expects the total number of global internet users to increase from 3.9 billion in 2018 to 5.3 billion by 2023.

All of this means facial recognition capabilities are poised to become more common in coming years, not less. The temptation for individuals to use the technology in pursuit of their own ideals of justice will be hard to resist, something University of Florida law professor Elizabeth Rowe touched upon in a recent interview.

“Just because we have access to all of this information doesn't mean that we should necessarily use it,” Rowe said. “Part of the problem is that there’s no reporting accountability of who’s using what and why, especially among private companies.”

In a sense, the rapid distribution of facial recognition technology mirrors the follies of gun distribution in the US. Using the Second Amendment as a catch-all justification, firearm proponents have pushed the US to the point where there are far more firearms than people, with regulations remaining relatively stagnant for decades. Some on the political right argue personal gun ownership is necessary to defend themselves from armed adversaries or a tyrannical government. That logic devolves quickly and inevitably leads to the current reality where the world’s wealthiest nation has the 28th highest rate of gun deaths. By providing facial recognition to everyone, technologists are handing out AR-15s.

With that in mind, clear regulatory rules and frameworks are of paramount importance. Activists and individuals using surveillance tech to target ugly malefactors might feel a short-term jolt of satisfaction, but their actions are ultimately contributing to the same ramping up of surveillance tech many have spent years trying to rein in.

Here’s What Else is New

⛓️ A persistent prison software issue is keeping inmates in jail longer than they are supposed to be ⛓️

According to whistleblowers, hundreds of incarcerated people are being held unjustly in Arizona correctional facilities due to a software bug.

🦆 DuckDuckGo Calls Out Google Search for 'Spying' on Users After Privacy Labels Go Live 🦆

🚁 US drone startup Skydio is reportedly working with over 20 US police agencies 🚁

The move comes as tech start-ups flock to fill government and military drone contracts once taken on by Chinese companies.

🇮🇳 India is reportedly trying to build out its own alternative internet 🇮🇳