Hello again everyone, I hope y’all are doing well! Not too much in the way of an update on this one. A quick reminder to check out this newsletter’s sister podcast, The Future is Ow, if you haven’t already. You can find that and subscribe here. Also, if you are so inclined, consider following my Medium account here. I usually post my longer State of Surveillance essays there in an alternate format and occasionally dip into some non-surveillance topics as well. And to the recent new subscribers, welcome!!

Alright, let’s dive into what’s quickly becoming one of the most divisive issues in the US.

Earlier this month, New York City (where I’m currently based) crossed into uncharted territory in the US’ fight against Covid-19 and its frustratingly determined variants. With Delta cases surging and residents quickly becoming accustomed to loosened restrictions, Mayor Bill de Blasio officially made New York the first city to require workers and customers to show proof of vaccination to enter a plethora of businesses, from indoor dining and gyms to billiards halls and strip clubs.

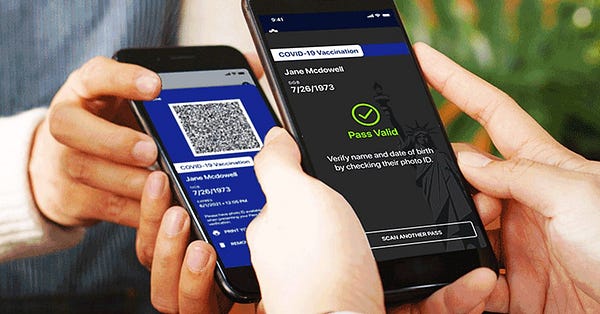

Digital vaccine passports (apps that can be scanned by businesses to verify an individual’s vaccination status) are a critical component of the city’s plan for verifying the vaccination status of its nearly 8.4 million residents. Though New York is the first US city to issue such a mandate, others around the country are paying close attention, and many are creating their own state-specific digital passports.

Almost immediately, news of New York’s effort reignited a firestorm of debate over the privacy and societal implications surrounding these still-new vaccine passports. Though experts agree these passports represent one of the best bets to help safely reopen society, they also, in their present form, risk being hijacked by surveillance-friendly autocrats eager to expand their all-seeing reach.

Before we move on, it may be worth taking a moment to differentiate the (many) vaccine passports being rolled out and how they work. As of now, the U.K., France, Israel, Australia, China, and the European Union all have some form of nationalized-ish vaccine passport that’s already being used in varying degrees to allow vaccinated individuals to travel, eat out, go to bars, and engage in other “normal” activities. The US, notably, has avoided a nationalized vaccine passport, with the Biden administration saying it’s “not their role” to create or mandate one.

The passports can differ greatly, but most will collect an individual’s name, birthday, the day they received their vaccine, the type of vaccine they received, and potentially other related health data. Passports like New York’s Excelsior say they do not track a user’s location. Privacy experts, however, say the scanners used to validate those passports (say, at a bar or a restaurant) can collect that data, and whoever operates them could, if so inclined, use it to track an individual.

The information most likely to be collected includes an individual’s name, birthdate, date of issuance, type of vaccine received, and/or Covid-19 testing information.

As most readers have no doubt heard, the pushback to vaccine passports came about as fast as the apps themselves were rolled out. The arguments against passports vary but for the purposes of this post, I’m going to break them into two large camps: privacy and social equity.

On the privacy front, pushback to vaccine passports has largely mirrored the hesitation experts expressed over the adoption of Covid-19 contact tracing apps, which I’ve outlined here in the past. In addition to worries around potential third-party tracking of location data, experts like Albert Fox Cahn of the Surveillance Technology Oversight Project (STOP) have expressed concern over the lack of transparency surrounding these apps.

“I have less information on how the Excelsior Pass data is used than the weather app on my phone,” Fox Cahn told MIT Technology Review earlier this year. “Because the pass is not open source, its privacy claims cannot easily be evaluated by third parties or experts.”

There’s also an issue of effectiveness. In New York City, residents have the option of choosing between the Excelsior pass or the city-specific pass. I use the city pass because it’s significantly lower-tech, but it’s so low-tech that it’s basically useless. As Fox Cahn wrote for the New York Daily News, the NYC app amounts to “nothing more than a camera app dressed up as a health credential.”

Others, like the Electronic Frontier Foundation, warn digital vaccine passports could easily be repurposed to serve as digital identification systems that outlive the pandemic and are used by governments as a catch-all identifier. These fears, as I’ll point out later, are turning out to carry more weight than some had first expected.

Activists warn a centralized digital identification system could be used to collect more granular types of personal data, like a person’s age, healthcare status, or even criminal history. All of that data could then potentially be stored in a large catchall database and used to monitor and surveil individuals in myriad ways, mirroring, in some ways, the types of always-on surveillance already seen in China and other authoritarian regimes.

What’s more, nearly all of these vaccine passports are being developed in partnership with large tech firms that, as readers of this newsletter will know, definitely don’t have the best track record of keeping their users’ personal information secure. This issue is made worse in the US since it still lacks any meaningful federal data privacy laws placing limits on the types of information companies can gather or who they can share it with. Basically, by opting into vaccine passports, citizens are trusting tech companies to keep their word and treat sensitive data with a level of care and stewardship that they haven’t shown in other domains.

That then leads us to the social issues. Digital vaccine passports, like all technology, reflect the social inequalities apparent in society. As the ACLU and other groups have noted, digital vaccine passports require an app, which requires technology that’s disproportionately out of reach to older and lower-income communities. These disparities could risk inflaming social inequality if passports are mandated for access to bars, restaurants, stadiums, museums, or any other communal space, in effect creating a class of individuals (typically affluent and tech-savvy) who are granted relief from life’s drudgery while the rest (ironically those also disproportionately likely to be “essential” workers) cannot.

Zooming in on NYC, black and Latino residents have lagged behind other groups in terms of vaccination rates and generally adopt technology at lower rates than other ethnicities nationwide. A recent Pew survey found that black Americans were slightly less likely than whites to own a smartphone and 11 percentage points less likely to have a home computer. When all this is put together, advocates worry vaccine passports could actually amplify inequality and further degrade hemorrhaging public trust in government and the medical community.

And then, there are the conservatives. If you’ve only briefly read or listened to anything related to vaccine passports in recent months, it would be understandable to assume the bulk of resistance has come from curmudgeonly conservative politicians, cracked-out conspiracy theorists, and hesitant skeptics. That’s partly true. Since vaccine passports are so closely tied to the vaccines themselves, and since conservatives (at least in the US) have shown the most resistance to vaccines, they’ve recently managed to fan the largest flames around passports.

In some ways, this conservative skepticism around vaccine passports mirrors some of the more dramatic fears from more level-headed privacy advocates: namely, fear of government overreach, external infringement on individual autonomy, and, of course, comparisons to China. But while some of these conservative complaints point in the same direction as those of experts, the motivations are often miles apart.

Dig deep enough into conservative complaints around vaccine passports and one often finds self-interested politicians stoking the flames of vaccine misinformation running rampant among their constituencies. Among everyday people, the skepticism is often limited to the invocation of the “V” word. Aside from a handful of civil liberty-focused libertarians, most average Republicans are unlikely to draw breaths of criticism around similar surveillance tactics being used at airports and other public spaces in the infallible name of patriotism.

These, of course, are generalizations, but the finer point is that it’s worth drawing some distinction between civil society’s privacy concerns over vaccine passports and those of the most extreme on the political right, lest we allow any valid concerns to be sucked up into a mirage of opaque, pointless partisanship.

Regardless, conservatives in the US (whether for the right reasons or not) are taking meaningful action against vaccine passports. As of June, 15 US states, including Texas, Alabama, Arizona, and Florida, have passed legislation banning vaccine passports wholesale.

With all of this in mind, though, polling shows the public is roughly split in its attitudes toward vaccine passports, with levels of acceptance varying by circumstance. A May Gallup poll, for example, found that 57% of US adults favor vaccine passports for airline travel and 55% support them for large gatherings like concerts or sporting events. I’d probably count myself among those. Support drops off, though, for vaccine passport requirements at workplaces (45%) or for indoor dining (40%).

It shouldn’t be a surprise that these figures get much muddier when partisanship is taken into account. While 85% of self-identifying Democrats support vaccine passports for air travel, that figure dips drastically to 28% for Republicans. Just 16% of Republicans support vaccine passport requirements for workplaces, compared to 69% of Democrats.

I alluded to it earlier, but on the privacy side of the vaccine passport debate, one of the primary concerns from groups like the EFF, written off at times as alarmist, revolves around a slippery slope argument: that these passports will eventually be expanded for uses beyond vaccine identification. You can probably already sense where this is heading, but yes, there’s evidence that slide is happening, both in the US and abroad.

Back in June, a FOIA request filed by the Surveillance Technology Oversight Project revealed that IBM (the maker of New York’s Excelsior pass) had signed a three-year contract with the state of New York worth $17 million, a figure nearly seven times higher than the $2.5 million publicly disclosed. Though the Excelsior pass had originally been pitched as a limited, short-term solution, the contract revealed New York has instead asked IBM to provide a roadmap for making the app accessible to the state’s entire population of 20 million. So why the discrepancy?

Around the same time, per The New York Times, IBM’s vice president of emerging business networks reportedly gave an interview where he admitted New York state was considering broadening Excelsior’s scope, with discussions underway of how the passport could be expanded to serve as a digital wallet capable of storing driver’s license information or other health data. As I write this, Excelsior pass currently allows users to upload an image of their driver’s license.

Just this week, though, another FOIA request from the Surveillance Technology Oversight Project found the expected cost of the Excelsior pass had swelled yet again, this time to $27 million. That contract alluded to a potential Phase 3 that would expand the data to include vaccination results from residents in New Jersey and Vermont. Meanwhile, Google, Apple, and Samsung have all announced plans to create features in their phones that allow users to generate QR codes with their vaccination details embedded. This comes as Apple is preparing to roll out a new feature in iOS 15 that will let users store a digital version of their driver’s license alongside their credit cards and other personal information in its Apple Wallet feature.

This all sounds relatively benign for now, but there are risks. Earlier this year, officials in Singapore announced they had used data gathered from the country’s TraceTogether contact tracing app to prosecute an individual in a murder case. Officials defended the move, saying they would only use the data (which they previously said would be limited to contact tracing) in “serious” crimes. Just one month later, Singapore passed a law officially allowing law enforcement to use data gleaned from its contact tracing app in criminal investigations. In most countries, the promise to limit how vaccine passport data is used rests only on the word of governments and companies. According to Top10 VPN, of the 120 contact tracing apps available in 71 countries, 19 didn’t even have a privacy policy.

In the background of all of this uncertainty is a raging pandemic that, thanks to new variants, is re-ravaging countries and threatens to stay with society, in some capacity, for many, many years. With this in mind, vaccine passports, in some form, will likely be an unavoidable reality for cities, states, and countries looking to resume normal operations with some modicum of sanity.

The privacy issues outlined here aren’t necessarily a plea to excise vaccine passports. On the contrary, most of the fears expressed could be addressed by a more open, transparent, and public-facing approach to app design. If vaccine passport makers commit, for example, to not collecting location data, the passports could actually present much greater upside with less risk than previous contact tracing app efforts.

As someone living in New York City, I’ve personally downloaded an app and have already used it to enter my gym. I opted to use the city app over the state’s Excelsior pass because it appears slightly more privacy-preserving, but concerns remain. As with everything else the pandemic has highlighted, though, personal risk calculations are just that: personal. Though the privacy implications associated with vaccine passports are real and present, they exist amid a realer and more present pandemic reality.

BMD


A coalition of more than 90 civil liberties groups around the world is calling on Tim Cook to stop the rollout of Apple’s controversial CSAM scanning tool.

The groups, which include the ACLU, the Center for Democracy and Technology, and others, wrote that they worried the tool “will be used to censor protected speech, threaten the privacy and security of people around the world, and have disastrous consequences for many children.”

"Once this capability is built into Apple products, the company and its competitors will face enormous pressure — and potentially legal requirements — from governments around the world to scan photos not just for CSAM, but also for other images a government finds objectionable," the advocates wrote.

The bottom line: Any hope Apple had that this issue would get swept away in the current of the news cycle looks to be quickly vanishing.

Cuba passed a sweeping new internet law that would criminalize the spreading of fake news.

The move comes just one month after the country experienced its largest anti-government protests in decades, which the country has officially blamed on outside US agitators on social media.

Cuba’s law is the latest in a growing number of countries passing restrictive legislation criminalizing online speech.

China passed one of the world’s strictest data privacy laws … but it won’t apply to government surveillance

Called the Personal Information Protection Law, the law will require any individual or private company handling a Chinese user’s data to first obtain consent, and then to limit data collection going forward.

Though China’s new rules may place some of the world’s strictest data restrictions on private firms, those same standards won’t apply equally to the government’s own internet activities.

That’s it for now! See y’all.